Deep-Learning-Specialization

Coursera Deep Learning Specialization: Neural Networks and Deep Learning.

In this course, you will learn the foundations of deep learning. When you finish this class, you will:

- Understand the major technology trends driving Deep Learning.

- Be able to build, train and apply fully connected deep neural networks.

- Know how to implement efficient (vectorized) neural networks.

- Understand the key parameters in a neural network’s architecture.

Week 1: Introduction to deep learning

Be able to explain the major trends driving the rise of deep learning, and understand where and how it is applied today.

- Quiz 1: Introduction to deep learning

Week 2: Neural Networks Basics

Learn to set up a machine learning problem with a neural network mindset. Learn to use vectorization to speed up your models.

- Quiz 2: Neural Network Basics

- Programming Assignment: Python Basics With Numpy

- Programming Assignment: Logistic Regression with a Neural Network mindset
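The speed-up from vectorization mentioned for Week 2 can be illustrated with a small NumPy comparison. This is only a sketch and is not taken from the course notebooks; the array size and the helper names `dot_loop` and `dot_vectorized` are assumptions chosen for illustration.

```python
import time

import numpy as np

# Hypothetical data: two random vectors of 100,000 entries each.
rng = np.random.default_rng(0)
w = rng.standard_normal(100_000)
x = rng.standard_normal(100_000)


def dot_loop(a, b):
    """Dot product with an explicit Python loop (slow)."""
    total = 0.0
    for i in range(len(a)):
        total += a[i] * b[i]
    return total


def dot_vectorized(a, b):
    """Dot product with a single vectorized NumPy call (fast)."""
    return float(np.dot(a, b))


start = time.perf_counter()
loop_result = dot_loop(w, x)
loop_seconds = time.perf_counter() - start

start = time.perf_counter()
vec_result = dot_vectorized(w, x)
vec_seconds = time.perf_counter() - start

# Both versions compute the same value; only the running time differs.
print(f"loop:       {loop_seconds:.4f} s")
print(f"vectorized: {vec_seconds:.4f} s")
```

The exact timings depend on the machine, so none are quoted here; the point is that both functions return the same number while the vectorized call avoids the per-element Python overhead.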

Week 3: Shallow neural networks

Learn to build a neural network with one hidden layer, using forward propagation and backpropagation.

- Quiz 3: Shallow Neural Networks

- Programming Assignment: Planar Data Classification with One Hidden Layer
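As a rough illustration of the Week 3 topic, a network with one hidden layer can be trained with forward propagation and backpropagation in a few lines of NumPy. This is a minimal sketch, not the assignment solution: the tanh hidden activation, sigmoid output, cross-entropy cost, hidden size, learning rate, and toy XOR-style data are all assumptions made for the example.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def init_params(n_x, n_h, seed=0):
    """Small random weights, zero biases (assumed initialization)."""
    rng = np.random.default_rng(seed)
    return {
        "W1": rng.standard_normal((n_h, n_x)) * 0.5,
        "b1": np.zeros((n_h, 1)),
        "W2": rng.standard_normal((1, n_h)) * 0.5,
        "b2": np.zeros((1, 1)),
    }


def forward(X, p):
    """Forward propagation: tanh hidden layer, sigmoid output."""
    A1 = np.tanh(p["W1"] @ X + p["b1"])
    A2 = sigmoid(p["W2"] @ A1 + p["b2"])
    return A1, A2


def cost(A2, Y):
    """Binary cross-entropy averaged over the examples."""
    eps = 1e-12  # avoids log(0)
    return float(-np.mean(Y * np.log(A2 + eps) + (1 - Y) * np.log(1 - A2 + eps)))


def backward(X, Y, p, A1, A2):
    """Backpropagation for the two-layer network above."""
    m = X.shape[1]
    dZ2 = A2 - Y
    dW2 = dZ2 @ A1.T / m
    db2 = dZ2.sum(axis=1, keepdims=True) / m
    dZ1 = (p["W2"].T @ dZ2) * (1 - A1 ** 2)  # derivative of tanh
    dW1 = dZ1 @ X.T / m
    db1 = dZ1.sum(axis=1, keepdims=True) / m
    return {"W1": dW1, "b1": db1, "W2": dW2, "b2": db2}


def train(X, Y, n_h=4, lr=0.5, steps=2000):
    p = init_params(X.shape[0], n_h)
    history = []
    for _ in range(steps):
        A1, A2 = forward(X, p)
        history.append(cost(A2, Y))
        grads = backward(X, Y, p, A1, A2)
        for key in p:
            p[key] -= lr * grads[key]  # plain gradient descent step
    return p, history


# Toy data: columns are examples, rows are features.
X = np.array([[0.0, 0.0, 1.0, 1.0], [0.0, 1.0, 0.0, 1.0]])
Y = np.array([[0.0, 1.0, 1.0, 0.0]])
params, history = train(X, Y)
print(f"cost before training: {history[0]:.4f}, after: {history[-1]:.4f}")
```

Data points are stored as columns here, so a batch of `m` examples with `n_x` features has shape `(n_x, m)`.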

Week 4: Deep Neural Networks

Understand the key computations underlying deep learning, use them to build and train deep neural networks, and apply them to computer vision.

- Quiz 4: Key concepts on Deep Neural Networks

- Programming Assignment: Building your Deep Neural Network Step by Step

- Programming Assignment: Deep Neural Network Application

Course Certificate

Assignment 4

In this assignment, you will implement two different object detection systems. The goals of this assignment are:

- Learn about a typical object detection pipeline: understand the training data format, modeling, and evaluation.

- Understand how to build two prominent detector designs: one-stage anchor-free detectors, and two-stage anchor-based detectors.

This assignment is due on Tuesday, March 29th at 11:59pm ET.

Q1: One-Stage Detector

The notebook one_stage_detector.ipynb will walk you through the implementation of FCOS: Fully Convolutional One-Stage Object Detection (Tian et al., ICCV 2019). You will train and evaluate your detector on the PASCAL VOC 2007 object detection dataset.

Q2: Two-Stage Detector

The notebook two_stage_detector.ipynb will walk you through the implementation of a two-stage object detector similar to Faster R-CNN (Ren et al., NeurIPS 2015). This will combine a fully-convolutional Region Proposal Network (RPN) and a second-stage recognition network.

1. Download the zipped assignment file

- Click here to download the starter code

2. Unzip all and open the Colab file from the Drive

Once you unzip the downloaded content, please upload the folder to your Google Drive. Then, open each *.ipynb notebook file with Google Colab by right-clicking the *.ipynb file. We recommend editing your *.py files in Google Colab, with the notebook and the code side by side. For more information on using Colab, please see our Colab tutorial.

3. Work on the assignment

Work through the notebook, executing cells and writing code in *.py, as indicated. You can save your work, both *.ipynb and *.py, in Google Drive (click “File” -> “Save”) and resume later if you don’t want to complete it all at once.

While working on the assignment, keep the following in mind:

- The notebook and the python file have clearly marked blocks where you are expected to write code. Do not write or modify any code outside of these blocks.

- Do not add or delete cells from the notebook. You may add new cells to perform scratch computations, but you should delete them before submitting your work.

- IMPORTANT: Run all cells, and do not clear out the outputs, before submitting. You will only get credit for code that has been run.

4. Evaluate your implementation on Autograder

When you want to evaluate your implementation, submit the *.py, *.ipynb and other required files to Autograder. You can do this either partway through or after implementing everything. You can grade a subset of the files partway through, but note that each such submission also counts against your daily submission quota. Please check our Autograder tutorial for details.

5. Download .zip file

Once you have completed a notebook, download the completed uniqueid_umid_A4.zip file, which is generated by the last cell of the two_stage_detector.ipynb file. Before executing the last cell in two_stage_detector.ipynb, please manually run all the cells of the notebook and save your results so that the zip file includes all updates.

Make sure your downloaded zip file includes your most up-to-date edits; the zip file should include:

- one_stage_detector.py

- two_stage_detector.py

- one_stage_detector.ipynb

- two_stage_detector.ipynb

- fcos_detector.pt

- rcnn_detector.pt

6. Submit your python and ipython notebook files to Autograder

When you are done, please upload your work to Autograder (UMich enrolled students only). Your *.ipynb files SHOULD include all the outputs. Please check that your outputs are up to date before submitting to Autograder.

NPTEL Deep Learning – IIT Ropar Assignment 4 Answers 2023

In this post, we provide answers to the NPTEL Deep Learning – IIT Ropar Assignment 4. The answers are provided for reference only; please complete the assignment using your own knowledge.

NPTEL Deep Learning – IIT Ropar Week 4 Assignment Answer 2023

1. Which step does Nesterov accelerated gradient descent perform before finding the update size?

- Increase the momentum

- Estimate the next position of the parameters

- Adjust the learning rate

- Decrease the step size

2. Select the parameter of vanilla gradient descent that controls the step size in the direction of the gradient.

- Learning rate

- None of the above

3. What does the distance between two contour lines on a contour map represent?

- The change in the output of function

- The direction of the function

- The rate of change of the function

- None of the above

4. Which of the following represents the contour plot of the function $f(x, y) = x^2 - y$?

5. What is the main advantage of using Adagrad over other optimization algorithms?

- It converges faster than other optimization algorithms.

- It is less sensitive to the choice of hyperparameters (learning rate).

- It is more memory-efficient than other optimization algorithms.

- It is less likely to get stuck in local optima than other optimization algorithms.

6. We are training a neural network using the vanilla gradient descent algorithm. We observe that the change in weights is small in successive iterations. What are the possible causes of this phenomenon?

- ∇w is small

- ∇w is large

7. You are given labeled data, which we call X, where rows are data points and columns are features. One column has most of its values as 0. Which algorithm should we use here for faster convergence and to achieve the optimal value of the loss function?

- Stochastic gradient descent

- Momentum-based gradient descent

8. What is the update rule for the ADAM optimizer?

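- $w_t = w_{t-1} - \mathrm{lr} \cdot \left(m_t / (\sqrt{v_t} + \epsilon)\right)$

- $w_t = w_{t-1} - \mathrm{lr} \cdot m$

- $w_t = w_{t-1} - \mathrm{lr} \cdot \left(m_t / (v_t + \epsilon)\right)$

- $w_t = w_{t-1} - \mathrm{lr} \cdot \left(v_t / (m_t + \epsilon)\right)$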
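For reference, the Adam update divides a running average of the gradient (m) by the square root of a running average of the squared gradient (v), plus a small epsilon. The sketch below is one way to write that step in NumPy. It includes the bias-corrected estimates from the Adam paper, which the quiz options omit; the function name `adam_step`, the default hyperparameters, and the toy objective f(w) = (w - 3)^2 are assumptions for illustration.

```python
import numpy as np


def adam_step(w, grad, m, v, t, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update; t is the 1-based step count."""
    m = beta1 * m + (1 - beta1) * grad        # first-moment (mean) estimate
    v = beta2 * v + (1 - beta2) * grad ** 2   # second-moment estimate
    m_hat = m / (1 - beta1 ** t)              # bias correction
    v_hat = v / (1 - beta2 ** t)
    w = w - lr * m_hat / (np.sqrt(v_hat) + eps)
    return w, m, v


def grad_fn(w):
    """Gradient of the toy objective f(w) = (w - 3)^2."""
    return 2.0 * (w - 3.0)


# First step from w = 0: the step size is approximately lr, whatever the gradient scale.
first_w, _, _ = adam_step(0.0, grad_fn(0.0), m=0.0, v=0.0, t=1, lr=0.1)

# Run a few hundred steps on the toy objective.
w, m, v = 0.0, 0.0, 0.0
for t in range(1, 501):
    w, m, v = adam_step(w, grad_fn(w), m, v, t, lr=0.1)
print(f"w after first step: {first_w:.4f}, w after 500 steps: {w:.4f}")
```

Because the step is normalized by the square root of v, the first update moves by roughly the learning rate regardless of how large the gradient is.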

9. What is the advantage of using mini-batch gradient descent over batch gradient descent?

- Mini-batch gradient descent is more computationally efficient than batch gradient descent.

- Mini-batch gradient descent leads to a more accurate estimate of the gradient than batch gradient descent.

- Mini-batch gradient descent gives us a better solution.

- Mini-batch gradient descent can converge faster than batch gradient descent.

10. Which of the following is a variant of gradient descent that uses an estimate of the next gradient to update the current position of the parameters?

- Momentum optimization

- Stochastic gradient descent

- Nesterov accelerated gradient descent
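The look-ahead idea behind Nesterov accelerated gradient descent can be sketched in a few lines: the gradient is evaluated at an estimated next position rather than at the current one. The code below is an illustrative sketch under assumptions, not the course's reference implementation; the function name `nag_minimize`, the learning rate, the momentum coefficient `gamma`, and the toy objective f(w) = (w - 3)^2 are all chosen for the example.

```python
def nag_minimize(grad_fn, w0, lr=0.1, gamma=0.9, steps=100):
    """Minimize a 1-D function with Nesterov accelerated gradient descent."""
    w = w0
    update = 0.0  # previous update (momentum history)
    for _ in range(steps):
        # Step 1: estimate the next position using only the momentum term.
        lookahead = w - gamma * update
        # Step 2: compute the gradient at that look-ahead point.
        update = gamma * update + lr * grad_fn(lookahead)
        # Step 3: apply the combined update.
        w = w - update
    return w


def grad_fn(w):
    """Gradient of the toy objective f(w) = (w - 3)^2."""
    return 2.0 * (w - 3.0)


w_final = nag_minimize(grad_fn, w0=0.0)
print(f"w after 100 NAG steps: {w_final:.6f}")
```

Plain momentum would call `grad_fn(w)` instead of `grad_fn(lookahead)`; that single line is the difference between the two methods in this sketch.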

About Deep Learning IIT – Ropar

Deep Learning has received a lot of attention over the past few years and has been employed successfully by companies like Google, Microsoft, IBM, Facebook, Twitter etc. to solve a wide range of problems in Computer Vision and Natural Language Processing. In this course, we will learn about the building blocks used in these Deep Learning based solutions. Specifically, we will learn about feedforward neural networks, convolutional neural networks, recurrent neural networks and attention mechanisms. We will also look at various optimization algorithms such as Gradient Descent, Nesterov Accelerated Gradient Descent, Adam, AdaGrad and RMSProp which are used for training such deep neural networks. At the end of this course, students would have knowledge of deep architectures used for solving various Vision and NLP tasks.

Criteria to get a certificate

- Average assignment score = 25% of the average of the best 8 assignments out of the total 12 assignments given in the course.

- Exam score = 75% of the proctored certification exam score out of 100.

- Final score = Average assignment score + Exam score.

You will be eligible for a certificate only if the average assignment score >= 10/25 and the exam score >= 30/75. If either of the two criteria is not met, you will not get the certificate even if the final score >= 40/100.
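The certificate criteria above amount to a short calculation. The sketch below works through it under two assumptions that the text does not state: each assignment is scored out of 100, and a learner with fewer than 8 submissions has the missing ones counted as 0. The function name `certificate_result` is hypothetical.

```python
def certificate_result(assignment_scores, exam_score):
    """Return (assignment component, exam component, final score, eligible).

    assignment_scores: scores out of 100 (assumed), up to 12 of them.
    exam_score: proctored exam score out of 100.
    """
    best8 = sorted(assignment_scores, reverse=True)[:8]
    # Dividing by 8 counts missing assignments as 0 (an assumption).
    assignment_component = 0.25 * (sum(best8) / 8)   # out of 25
    exam_component = 0.75 * exam_score               # out of 75
    final = assignment_component + exam_component    # out of 100
    eligible = assignment_component >= 10 and exam_component >= 30
    return assignment_component, exam_component, final, eligible


# Eight assignments at 80 and four skipped, exam score 50.
print(certificate_result([80] * 8 + [0] * 4, 50))   # (20.0, 37.5, 57.5, True)

# Perfect assignments but an exam score of 39: the final score is above 40,
# yet the exam component (29.25) is below 30, so no certificate.
print(certificate_result([100] * 12, 39))           # (25.0, 29.25, 54.25, False)
```

The second call shows why both thresholds matter: a final score of at least 40 is not sufficient on its own.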

NPTEL Deep Learning – IIT Ropar Assignment 4 Answers 2022

1. Consider the movement on the 3D error surface for the Vanilla Gradient Descent algorithm. Select all the options that are TRUE. a. Smaller the gradient, slower the movement b. Larger the gradient, faster the movement c. Gentler the slope, smaller the gradient d. Steeper the slope, smaller the gradient

2. Pick out the drawback of the Vanilla Gradient Descent algorithm. a. Very slow movement on gentle slopes b. Increased oscillations before converging c. Escapes minima because of long strides d. Very slow movement on steep slopes


3. Comment on the update at the t-th step in Momentum-based Gradient Descent. a. Weighted average of gradient b. Polynomial weighted average c. Exponential weighted average of gradient d. Average of recent three gradients

4. Given a horizontal slice of the error surface as shown in the figure below, if the error at the position p is 0.49 then what is the error at point q? a. 0.70 b. 0.69 c. 0.49 d. 0

5. Identify the update rule for Nesterov Accelerated Gradient Descent.

6. Select all the options that are TRUE for Line search. a. w is updated using different learning rates b. updated value of w always gives the minimum loss c. Involves minimum calculation d. Best value of Learning rate is used at every step


7. Assume you have 1,50,000 data points and a mini-batch size of 25,000. One epoch implies one pass over the data, and one step means one update of the parameters. What is the number of steps in one epoch for Mini-Batch Gradient Descent? a. 1 b. 1,50,000 c. 6 d. 60

8. Which of the following learning rate methods need to tune two hyperparameters? I. step decay II. exponential decay III. 1/t decay a. I and II b. II and III c. I and III d. I, II and III

9. How can you reduce the oscillations and improve the stochastic estimates of the gradient that is estimated from one data point at a time? a. Mini-Batch b. Adam c. RMSprop d. Adagrad

10. Select all the statements that are TRUE. a. RMSprop is very aggressive when decaying the learning rate b. Adagrad decays the learning rate in proportion to the update history c. In Adagrad, frequent parameters will receive very large updates because of the decayed learning rate d. RMSprop has overcome the problem of Adagrad getting stuck when close to convergence
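The relationship between data size, batch size, and steps per epoch used in question 7 can be checked with a short calculation. This is a sketch; the function name `steps_per_epoch` is hypothetical, and rounding up for a final partial batch is an assumption that does not matter when the batch size divides the data size evenly.

```python
def steps_per_epoch(num_examples, batch_size):
    """Number of parameter updates in one pass over the data."""
    # Integer ceiling division: a final partial batch still costs one step.
    return -(-num_examples // batch_size)


n = 150_000  # written as 1,50,000 in the question

print(steps_per_epoch(n, 25_000))  # mini-batch gradient descent: 6
print(steps_per_epoch(n, n))       # batch gradient descent: 1
print(steps_per_epoch(n, 1))       # stochastic gradient descent: 150000
```

Batch and stochastic gradient descent are the two extremes of the same formula, with the batch size set to the full data set and to a single example respectively.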


This repo contains My Solutions for Coursera Deep Learning Specialization Assignments

OlaAbdallahM/Coursera-Deep-Learning-Specialization-Assignments-Solutions

Programming assignments from all courses in the Coursera Deep Learning specialization offered by deeplearning.ai.

Instructor: Andrew Ng

In five courses, you will learn the foundations of Deep Learning, understand how to build neural networks, and learn how to lead successful machine learning projects. You will learn about Convolutional networks, RNNs, LSTM, Adam, Dropout, BatchNorm, Xavier/He initialization, and more. You will work on case studies from healthcare, autonomous driving, sign language reading, music generation, and natural language processing. You will master not only the theory, but also see how it is applied in industry. You will practice all these ideas in Python and in TensorFlow, which we will teach.

Course 1: Neural Networks and Deep Learning

Objectives:

- Understand the major technology trends driving Deep Learning.

- Be able to build, train and apply fully connected deep neural networks.

- Know how to implement efficient (vectorized) neural networks.

- Understand the key parameters in a neural network's architecture.

- Week 2 - Python Basics with Numpy

- Week 2 - Logistic Regression with a Neural Network mindset

- Week 3 - Planar data classification with one hidden layer

- Week 4 - Building your Deep Neural Network: Step by Step

- Week 4 - Deep Neural Network for Image Classification: Application

Course 2: Improving Deep Neural Networks: Hyperparameter tuning, Regularization and Optimization

- Understand industry best-practices for building deep learning applications.

- Be able to effectively use the common neural network "tricks", including initialization, L2 and dropout regularization, Batch normalization, and gradient checking.

- Be able to implement and apply a variety of optimization algorithms, such as mini-batch gradient descent, Momentum, RMSprop and Adam, and check for their convergence.

- Understand new best-practices for the deep learning era of how to set up train/dev/test sets and analyze bias/variance

- Be able to implement a neural network in TensorFlow.

- Week 1 - Initialization

- Week 1 - Regularization

- Week 1 - Gradient Checking

- Week 2 - Optimization Methods

- Week 3 - TensorFlow Tutorial

Course 3: Structuring Machine Learning Projects

- Understand how to diagnose errors in a machine learning system.

- Be able to prioritize the most promising directions for reducing error

- Understand complex ML settings, such as mismatched training/test sets, and comparing to and/or surpassing human-level performance

- Know how to apply end-to-end learning, transfer learning, and multi-task learning

- There are no programming assignments for this course. But this course comes with very interesting case study quizzes.

Course 4: Convolutional Neural Networks

- Understand how to build a convolutional neural network, including recent variations such as residual networks.

- Know how to apply convolutional networks to visual detection and recognition tasks.

- Know how to use neural style transfer to generate art.

- Be able to apply these algorithms to a variety of image, video, and other 2D or 3D data.

- Week 1 - Convolutional Model: step by step

- Week 1 - Convolutional Neural Networks: Application

- Week 2 - Keras - Tutorial - Happy House

- Week 2 - Residual Networks

- Week 2 - Transfer Learning with MobileNet

- Week 3 - Car detection with YOLO for Autonomous Driving

- Week 3 - Image Segmentation Unet

- Week 4 - Art Generation with Neural Style Transfer

- Week 4 - Face Recognition

Course 5: Sequence Models

- Understand how to build and train Recurrent Neural Networks (RNNs), and commonly-used variants such as GRUs and LSTMs.

- Be able to apply sequence models to natural language problems, including text synthesis.

- Be able to apply sequence models to audio applications, including speech recognition and music synthesis.

- Week 1 - Building a Recurrent Neural Network - Step by Step

- Week 1 - Dinosaur Land -- Character-level Language Modeling

- Week 1 - Jazz improvisation with LSTM

- Week 2 - Word Vector Representation and Debiasing

- Week 2 - Emojify!

- Week 3 - Neural Machine Translation with Attention

- Week 3 - Trigger Word Detection

- Week 4 - Transformer Network

- Week 4 - Transformer Network Application: Named-Entity Recognition

- Week 4 - Transformer Network Application: Question Answering


Deep Learning - IIT Ropar : Assignment 9 Reevaluations!!

Dear Learners, Assignment 9 submissions of all students have been reevaluated by setting the weightage of Question 9 to 0. Students are requested to find their revised scores for Assignment 9 on the Progress page. -NPTEL Team

Deep Learning - IIT Ropar : Assignment 11 Reevaluations!!

Dear Learners, Assignment 11 submissions of all students have been reevaluated by changing the answers for Questions 1 and 9. Students are requested to find their revised scores for Assignment 11 on the Progress page. -NPTEL Team

Deep Learning - IIT Ropar : Problem solving Session Reminder !!

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: April 17, 2024 - Wednesday Time:04.00 PM - 06.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Happy Learning. -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: April 16, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Session 2 : Date: April 16, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/upg-fjmi-fpy Happy Learning. -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: April 14, 2024 - Sunday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Survey on Problem Solving sessions - Deep Learning - IIT Ropar (noc24-cs59)

Dear Learners, We would like to know if the expectations with which you attended this problem solving session are being met, so please take 2 minutes to fill out our feedback form. It would help us tremendously in gauging the learner experience. Here is the link to the form: https://docs.google.com/forms/d/1AAE6fSJWFtU1wBVudgwU-URBCUygIOSpxJ-TC3IqnzY/viewform Problem Solving Session recording videos will be uploaded inside the separate unit called "Problem solving Session", along with the slides used wherever applicable. Recording sheet: https://docs.google.com/spreadsheets/d/1RJjMrPe3rqJ2FtDKi3f5hoDdCGELm24xVR4Gfx7XFms/edit -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: April 13, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/l/team/19%3AB9WpkGPrx_BJn0X-AnT1yjMJjAQ2eIthSXEW7DaBnIY1%40thread.tacv2/conversations?groupId=94156c13-59e5-47a4-b064-991f0d4f8d48&tenantId=6f15cd97-f6a7-41e3-b2c5-ad4193976476 Session 2 : Date: April 13, 2024 - Saturday Time: 03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Exam Format - April, 2024!!

Dear Candidate,

****This is applicable only for the exam registered candidates****

Type of exam will be available in the list: Click Here

You will have to appear at the allotted exam center and produce your Hall ticket and Government Photo Identification Card (Example: Driving License, Passport, PAN card, Voter ID, Aadhaar-ID with your Name, date of birth, photograph and signature) for verification and take the exam in person. You can find the final allotted exam center details in the hall ticket. The hall ticket is yet to be released. We will notify the same through email and SMS.

Type of exam: Computer based exam (Please check in the above list corresponding to your course name)

The questions will be on the computer and the answers will have to be entered on the computer; type of questions may include multiple choice questions, fill in the blanks, essay-type answers, etc.

Type of exam: Paper and pen Exam (Please check in the above list corresponding to your course name)

The questions will be on the computer. You will have to write your answers on sheets of paper and submit the answer sheets. Papers will be sent to the faculty for evaluation.

On-Screen Calculator Demo Link: Kindly use the below link to get an idea of how the On-screen calculator will work during the exam. https://tcsion.com/OnlineAssessment/ScientificCalculator/Calculator.html

NOTE: Physical calculators are not allowed inside the exam hall.

Thank you!

-NPTEL Team

Deep Learning - IIT Ropar : Assignment 11 Solutions Released!!

Dear Learners, The Solutions of Week 11 for the course "Deep Learning - IIT Ropar" have been released in the portal. Please go through the solution and in case of any doubt post your queries in the forum. Assignment 11 Solution Link: https://storage.googleapis.com/swayam-node1-production.appspot.com/assets/pdf/noc24_cs59/Week11.pdf Happy Learning! Thanks & Regards, NPTEL Team

Deep Learning - IIT Ropar : Assignment 10 Solutions Released!!

Dear Learners, The Solutions of Week 10 for the course "Deep Learning - IIT Ropar" have been released in the portal. Please go through the solution and in case of any doubt post your queries in the forum. Assignment 10 Solution Link: https://storage.googleapis.com/swayam-node1-production.appspot.com/assets/pdf/noc24_cs59/Week10.pdf Happy Learning! Thanks & Regards, NPTEL Team

Week 12 Feedback Form: Deep Learning - IIT Ropar!!

Dear Learners, Thank you for continuing with the course and hope you are enjoying it. We would like to know if the expectations with which you joined this course are being met and hence please do take 2 minutes to fill out our weekly feedback form. It would help us tremendously in gauging the learner experience. Here is the link to the form: https://docs.google.com/forms/d/18KyA_kLFELfG_qUjs9C7URkOUhPqVsMOEOMdwPHbCHY/viewform Thank you -NPTEL team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: April 09, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Session 2 : Date: April 09, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/upg-fjmi-fpy Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar :Week 12 content & Assignment live now!!

Dear Learners, The lecture videos for Week 12 have been uploaded for the course "Deep Learning - IIT Ropar". The lectures can be accessed using the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=162&lesson=163 The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view contents (if you aren't logged in already). Assignment-12 for Week-12 is also released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=162&assessment=278 The assignment has to be submitted on or before Wednesday, 17/04/2024, 23:59 IST. As we have done so far, please use the discussion forums if you have any questions on this module. Note: Please check the due date of the assignments on the announcement and assignment pages; if you see any mismatch, write to us immediately. Thanks and Regards, -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: April 06, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/l/team/19%3AB9WpkGPrx_BJn0X-AnT1yjMJjAQ2eIthSXEW7DaBnIY1%40thread.tacv2/conversations?groupId=94156c13-59e5-47a4-b064-991f0d4f8d48&tenantId=6f15cd97-f6a7-41e3-b2c5-ad4193976476 Session 2 : Date: April 06, 2024 - Saturday Time: 03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: April 02, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Session 2 : Date: April 02, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/upg-fjmi-fpy Happy Learning. -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: March 30, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/l/team/19%3AB9WpkGPrx_BJn0X-AnT1yjMJjAQ2eIthSXEW7DaBnIY1%40thread.tacv2/conversations?groupId=94156c13-59e5-47a4-b064-991f0d4f8d48&tenantId=6f15cd97-f6a7-41e3-b2c5-ad4193976476 Session 2 : Date: March 30, 2024 - Saturday Time: 03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar :Week 11 content & Assignment live now!!

Dear Learners, The lecture videos for Week 11 have been uploaded for the course "Deep Learning - IIT Ropar". The lectures can be accessed using the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=150&lesson=151 The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view contents (if you aren't logged in already). Practice Assignment-11 for Week-11 is also released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=150&assessment=262 Assignment-11 for Week-11 is also released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=150&assessment=277 The assignment has to be submitted on or before Wednesday, 10/04/2024, 23:59 IST. As we have done so far, please use the discussion forums if you have any questions on this module. Note: Please check the due date of the assignments on the announcement and assignment pages; if you see any mismatch, write to us immediately. Thanks and Regards, -NPTEL Team

Deep Learning - IIT Ropar : Assignment 7, 8 and 9 Solutions Released!!

Dear Learners, The Solutions of Week 7, 8 and Week 9 for the course "Deep Learning - IIT Ropar" have been released in the portal. Please go through the solution and in case of any doubt post your queries in the forum. Assignment 7 Solution Link: https://storage.googleapis.com/swayam-node1-production.appspot.com/assets/pdf/noc24_cs59/Week%207%20(1).pdf Assignment 8 Solution Link: https://storage.googleapis.com/swayam-node1-production.appspot.com/assets/pdf/noc24_cs59/Week8.pdf Assignment 9 Solution Link: https://storage.googleapis.com/swayam-node1-production.appspot.com/assets/img/noc24_cs59/Week9.pdf Happy Learning! Thanks & Regards, NPTEL Team

Week 10 Feedback Form: Deep Learning - IIT Ropar!!

Important notice: No change in NPTEL exam schedule for April 2024.

Dear Student,

We wanted to take a moment to address an important matter regarding the upcoming election dates and their potential impact on your exam schedule.

- None of the election dates clash with scheduled exam dates. If we schedule additional dates, we will ensure they again do not clash with elections in your state.

- Hence this is to confirm that there will be no changes to the exam dates and they are the same as previously scheduled. We may have exams in some cities on April 19 and April 26 depending on seat availability on scheduled dates. But again this will be done ensuring we don't conduct exams on election dates in your state.

- Your academic progress and success remain our top priority, and we are committed to maintaining the integrity of the examination process.

- We have more than 6 lakh learners registered for April exams and logistics has been a huge challenge. We understand that some of you may need to travel to your native cities to participate in the voting process. Please remember that you selected your exam cities during registration, and it is crucial that you return to these cities to take your exams as scheduled. Since hall ticket and center allocation is under process, exam cities selected by you during exam registration cannot be changed now.

Hence we kindly request that you make the necessary arrangements to ensure you can both exercise your right to vote and fulfill your academic obligations.

Warm Regards,

NPTEL Team.

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: March 26, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Session 2 : Date: March 26, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/upg-fjmi-fpy Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar :Week 10 content & Assignment live now!!

Dear Learners, The lecture videos for Week 10 have been uploaded for the course "Deep Learning - IIT Ropar". The lectures can be accessed using the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=130&lesson=131 The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view the contents (if you aren't logged in already). Assignment-10 for Week-10 has also been released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=130&assessment=276 The assignment has to be submitted on or before Wednesday, [03/04/2024], 23:59 IST. As we have done so far, please use the discussion forums if you have any questions on this module. Note: Please check the due date of the assignments on the announcement and assignment pages; if you see any mismatch, write to us immediately. Thanks and Regards, -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: March 23, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/l/team/19%3AB9WpkGPrx_BJn0X-AnT1yjMJjAQ2eIthSXEW7DaBnIY1%40thread.tacv2/conversations?groupId=94156c13-59e5-47a4-b064-991f0d4f8d48&tenantId=6f15cd97-f6a7-41e3-b2c5-ad4193976476 Session 2 : Date: March 23, 2024 - Saturday Time: 03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Week 9 Feedback Form: Deep Learning - IIT Ropar!!

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: March 19, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Session 2 : Date: March 19, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/upg-fjmi-fpy Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar :Week 9 content & Assignment live now!!

Dear Learners, The lecture videos for Week 9 have been uploaded for the course "Deep Learning - IIT Ropar". The lectures can be accessed using the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=115&lesson=116 The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view the contents (if you aren't logged in already). Assignment-9 for Week-9 has also been released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=115&assessment=275 The assignment has to be submitted on or before Wednesday, [27/03/2024], 23:59 IST. As we have done so far, please use the discussion forums if you have any questions on this module. Note: Please check the due date of the assignments on the announcement and assignment pages; if you see any mismatch, write to us immediately. Thanks and Regards, -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: March 16, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/l/team/19%3AB9WpkGPrx_BJn0X-AnT1yjMJjAQ2eIthSXEW7DaBnIY1%40thread.tacv2/conversations?groupId=94156c13-59e5-47a4-b064-991f0d4f8d48&tenantId=6f15cd97-f6a7-41e3-b2c5-ad4193976476 Session 2 : Date: March 16, 2024 - Saturday Time: 03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Week 8 Feedback Form: Deep Learning - IIT Ropar!!

Deep Learning - IIT Ropar : Assignment 6 Reevaluations.

Dear Learners, The Assignment 6 submissions of all students have been reevaluated with the weightage of Question 10 set to 0. Students are requested to check their revised Assignment 6 scores on the Progress page. -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: March 12, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Session 2 : Date: March 12, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/upg-fjmi-fpy Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar :Week 8 content & Assignment live now!!

Dear Learners, The lecture videos for Week 8 have been uploaded for the course "Deep Learning - IIT Ropar". The lectures can be accessed using the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=107&lesson=108 The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view the contents (if you aren't logged in already). Assignment-8 for Week-8 has also been released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=107&assessment=273 The assignment has to be submitted on or before Wednesday, [20/03/2024], 23:59 IST. As we have done so far, please use the discussion forums if you have any questions on this module. Note: Please check the due date of the assignments on the announcement and assignment pages; if you see any mismatch, write to us immediately. Thanks and Regards, -NPTEL Team

Deep Learning - IIT Ropar : Assignment 5 and 6 Solutions Released!!

Dear Learners, The Solutions of Week 5 and Week 6 for the course " Deep Learning - IIT Ropar " have been released in the portal. Please go through the solution and in case of any doubt post your queries in the forum. Assignment 5 Solution Link: https://storage.googleapis.com/swayam-node1-production.appspot.com/assets/img/noc24_cs59/week5.pdf Assignment 6 Solution Link: https://storage.googleapis.com/swayam-node1-production.appspot.com/assets/img/noc24_cs59/week6.pdf Happy Learning! Thanks & Regards, NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: March 09, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/l/team/19%3AB9WpkGPrx_BJn0X-AnT1yjMJjAQ2eIthSXEW7DaBnIY1%40thread.tacv2/conversations?groupId=94156c13-59e5-47a4-b064-991f0d4f8d48&tenantId=6f15cd97-f6a7-41e3-b2c5-ad4193976476 Session 2 : Date: March 09, 2024 - Saturday Time: 03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Week 7 Feedback Form: Deep Learning - IIT Ropar!!

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: March 05, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Session 2 : Date: March 05, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/upg-fjmi-fpy Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar : Update on Week 7 and Week 8 Assignments!!

Dear Learners, The Week 7 assignment has been moved to Week 8, as it was intended for Week 8. The lecture videos for Week 8 have also been made live; please refer to them. Lesson Link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=107&lesson=108 Assignment Link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=107&assessment=273 The assignment has to be submitted on or before Wednesday, [20/03/2024], 23:59 IST. As for Week 7, a new assignment will be released shortly. Please make sure you take both assignments. -NPTEL Team

Deep Learning - IIT Ropar :Week 7 updated Assignment live now!!

Dear Learners, The updated Assignment-7 for Week-7 has been released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=92&assessment=274 The assignment has to be submitted on or before Wednesday, [13/03/2024], 23:59 IST. Note: Please check the due date of the assignments on the announcement and assignment pages; if you see any mismatch, write to us immediately. Thanks and Regards, -NPTEL Team

Deep Learning - IIT Ropar :Week 7 content & Assignment live now!!

Dear Learners, The lecture videos for Week 7 have been uploaded for the course "Deep Learning - IIT Ropar". The lectures can be accessed using the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=92&lesson=93 The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view the contents (if you aren't logged in already). Assignment-7 for Week-7 has also been released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=92&assessment=273 The assignment has to be submitted on or before Wednesday, [13/03/2024], 23:59 IST. As we have done so far, please use the discussion forums if you have any questions on this module. Note: Please check the due date of the assignments on the announcement and assignment pages; if you see any mismatch, write to us immediately. Thanks and Regards, -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: Mar 02, 2024 - Saturday Time: 03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/l/team/19%3AB9WpkGPrx_BJn0X-AnT1yjMJjAQ2eIthSXEW7DaBnIY1%40thread.tacv2/conversations?groupId=94156c13-59e5-47a4-b064-991f0d4f8d48&tenantId=6f15cd97-f6a7-41e3-b2c5-ad4193976476 Session 2 : Date: Mar 02, 2024 - Saturday Time: 03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Week 6 Feedback Form: Deep Learning - IIT Ropar!!

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: Feb 27, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Session 2 : Date: Feb 27, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/upg-fjmi-fpy Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar: Assignment 3 and 4 Solutions Released!!

Dear Learners, The Solutions of Week 3 and Week 4 for the course "Deep Learning - IIT Ropar" have been released in the portal. Please go through the solution and in case of any doubt post your queries in the forum. Assignment 3 Solution Link: https://storage.googleapis.com/swayam-node1-production.appspot.com/assets/pdf/noc24_cs59/week3.pdf Assignment 4 Solution Link: https://storage.googleapis.com/swayam-node1-production.appspot.com/assets/img/noc24_cs59/week4%20(1).pdf Happy Learning! Thanks & Regards, NPTEL Team

NPTEL: Exam Registration date is extended for 12 week courses of Jan 2024!

- No further extension will be provided.

- This extension is only applicable for 12-week courses.

Deep Learning - IIT Ropar :Week 6 content & Assignment live now!!

Dear Learners, The lecture videos for Week 6 have been uploaded for the course "Deep Learning - IIT Ropar". The lectures can be accessed using the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=83&lesson=84 The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view the contents (if you aren't logged in already). Assignment-6 for Week-6 has also been released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=83&assessment=271 The assignment has to be submitted on or before Wednesday, [06/03/2024], 23:59 IST. As we have done so far, please use the discussion forums if you have any questions on this module. Note: Please check the due date of the assignments on the announcement and assignment pages; if you see any mismatch, write to us immediately. Thanks and Regards, -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: Feb 24, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/l/team/19%3AB9WpkGPrx_BJn0X-AnT1yjMJjAQ2eIthSXEW7DaBnIY1%40thread.tacv2/conversations?groupId=94156c13-59e5-47a4-b064-991f0d4f8d48&tenantId=6f15cd97-f6a7-41e3-b2c5-ad4193976476 Session 2 : Date: Feb 24, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar : Assignment 3 Reevaluations!!

Dear Learners, The Assignment 3 submissions of all students have been reevaluated with the weightage of Questions 6 & 7 set to 0. Students are requested to check their revised Assignment 3 scores on the Progress page. -NPTEL Team

Week 5 Feedback Form: Deep Learning - IIT Ropar!!

Reminder: NPTEL: Exam registration date is extended for Jan 2024 courses.

Dear Learner, The exam registration for the Jan 2024 NPTEL course certification exam is extended till February 23, 2024 - 05.00 P.M. CLICK HERE to register for the exam. Choose from the cities where the exam will be conducted: Exam Cities. Click here to view the Timeline and Guideline: Guideline. For further details on the registration process, please refer to the previous announcement on the course page. -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: Feb 20, 2024 - Tuesday Time: 06.00 PM - 08.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Session 2 : Date: Feb 20, 2024 - Tuesday Time: 06.00 PM - 08.00 PM Link to join: https://meet.google.com/upg-fjmi-fpy Happy Learning. -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: Feb 17, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/l/team/19%3AB9WpkGPrx_BJn0X-AnT1yjMJjAQ2eIthSXEW7DaBnIY1%40thread.tacv2/conversations?groupId=94156c13-59e5-47a4-b064-991f0d4f8d48&tenantId=6f15cd97-f6a7-41e3-b2c5-ad4193976476 Session 2 : Date: Feb 17, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar :Week 5 content & Assignment live now!!

Dear Learners, The lecture videos for Week 5 have been uploaded for the course "Deep Learning - IIT Ropar". The lectures can be accessed using the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=71&lesson=72 The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view the contents (if you aren't logged in already). Assignment-5 for Week-5 has also been released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=71&assessment=268 The assignment has to be submitted on or before Wednesday, [28/02/2024], 23:59 IST. As we have done so far, please use the discussion forums if you have any questions on this module. Note: Please check the due date of the assignments on the announcement and assignment pages; if you see any mismatch, write to us immediately. Thanks and Regards, -NPTEL Team

Dear Learner, The exam registration for the Jan 2024 NPTEL course certification exam is extended till February 20, 2024 - 05.00 P.M. CLICK HERE to register for the exam. Choose from the cities where the exam will be conducted: Exam Cities. Click here to view the Timeline and Guideline: Guideline. For further details on the registration process, please refer to the previous announcement on the course page. -NPTEL Team

Week 4 Feedback Form: Deep Learning - IIT Ropar!!

Deep Learning - IIT Ropar : Week 4 content & Assignment live now.

Dear Learners, The lecture videos for Week 4 have been uploaded for the course "Deep Learning - IIT Ropar". The lectures can be accessed using the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=59&lesson=60 The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view the contents (if you aren't logged in already). Practice Assignment-4 for Week-4 has also been released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=59&assessment=261 Assignment-4 for Week-4 has also been released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=59&assessment=266 The assignment has to be submitted on or before Wednesday, [21/02/2024], 23:59 IST. As we have done so far, please use the discussion forums if you have any questions on this module. Note: Please check the due date of the assignments on the announcement and assignment pages; if you see any mismatch, write to us immediately. Thanks and Regards, -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: Feb 13, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Session 2 : Date: Feb 13, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/upg-fjmi-fpy Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar: Assignment 1 and 2 Solutions Released!!

Dear Learners, The Solutions of Week 1 and Week 2 for the course " Deep Learning - IIT Ropar " have been released in the portal. Please go through the solution and in case of any doubt post your queries in the forum. Assignment 1 Solution Link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=17&lesson=245 Assignment 2 Solution Link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=36&lesson=246 Happy Learning! Thanks & Regards, NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: Feb 10, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/l/team/19%3AB9WpkGPrx_BJn0X-AnT1yjMJjAQ2eIthSXEW7DaBnIY1%40thread.tacv2/conversations?groupId=94156c13-59e5-47a4-b064-991f0d4f8d48&tenantId=6f15cd97-f6a7-41e3-b2c5-ad4193976476 Session 2 : Date: Feb 10, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar: Reminder for Assignment 1 & 2 deadline!!

Dear Learners, The deadline for Assignments 1 & 2 is Wednesday, [07/02/2024], 23:59 IST. Kindly submit the assignments before the deadline. Thanks and Regards, -NPTEL Team

Week 3 Feedback Form: Deep Learning - IIT Ropar!!

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: Feb 06, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Session 2 : Date: Feb 06, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/upg-fjmi-fpy Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar - Problem Solving Session Recording is available!!

Dear Learner, We have uploaded the recorded videos of the Live Interaction Session - Problem Solving Session of Week 1. The videos are uploaded inside the separate unit called "Problem solving Session", along with the slides used wherever applicable. Log in to the course on swayam.gov.in to check the same. -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: Feb 03, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/l/team/19%3AB9WpkGPrx_BJn0X-AnT1yjMJjAQ2eIthSXEW7DaBnIY1%40thread.tacv2/conversations?groupId=94156c13-59e5-47a4-b064-991f0d4f8d48&tenantId=6f15cd97-f6a7-41e3-b2c5-ad4193976476 Session 2 : Date: Feb 03, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar : Week 3 content & Assignment is live now !!

Dear Learners, The lecture videos for Week 3 have been uploaded for the course "Deep Learning - IIT Ropar". The lectures can be accessed using the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=46&lesson=47 The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view the contents (if you aren't logged in already). Assignment-3 for Week-3 has also been released and can be accessed from the following link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=46&assessment=265 The assignment has to be submitted on or before Wednesday, [14/02/2024], 23:59 IST. As we have done so far, please use the discussion forums if you have any questions on this module. Note: Please check the due date of the assignments on the announcement and assignment pages; if you see any mismatch, write to us immediately. Thanks and Regards, -NPTEL Team

Reminder: NPTEL: Exam Registration is open now for Jan 2024 courses!

Dear Learner,

Here is the much-awaited announcement on registering for the Jan 2024 NPTEL course certification exam.

1. The registration for the certification exam is open only to those learners who have enrolled in the course.

2. If you want to register for the exam for this course, log in here using the same email ID that you used to enroll in the course on the Swayam portal. Please note that only assignments submitted through the exam-registered email ID will be considered towards the final consolidated score and certification.

3. Date of exam: Apr 28, 2024

CLICK HERE to register for the exam.

Choose from the Cities where exam will be conducted: Exam Cities

4. Exam fees:

If you register for the exam and pay before Feb 12, 2024 - 5:00 PM, the exam fee will be Rs. 1000/- per exam.

5. 50% fee waiver for the following categories:

Students belonging to the SC/ST category: please select Yes for the SC/ST option and upload the correct Community certificate.

Students belonging to the PwD category with more than 40% disability: please select Yes for the option and upload the relevant Disability certificate.

6. Last date for exam registration: Feb 16, 2024 - 5:00 PM (Friday).

7. Between Feb 12, 2024 - 5:00 PM & Feb 16, 2024 - 5:00 PM late fee will be applicable.

8. Mode of payment: Online payment - debit card/credit card/net banking/UPI.

9. HALL TICKET:

The hall ticket will be available for download tentatively by 2 weeks prior to the exam date. We will confirm the same through an announcement once it is published.

10. FOR CANDIDATES WHO WOULD LIKE TO WRITE MORE THAN 1 COURSE EXAM:- you can add or delete courses and pay separately – till the date when the exam form closes. Same day of exam – you can write exams for 2 courses in the 2 sessions. Same exam center will be allocated for both the sessions.

11. Data changes:

Last date for data changes: Feb 16, 2024 - 5:00 PM.

We will charge an additional fee of Rs. 200 to make any changes related to name, DOB, photo, signature, SC/ST and PWD certificates after the last date of data changes.

The following 6 fields can be changed (until the form closes) ONLY when there are NO courses in the course cart. You will be able to edit those fields only if you:

- REMOVE unpaid courses from the cart, and/or

- CANCEL paid courses

1. Do you come under the SC/ST category? *

2. SC/ST Proof

3. Are you a person with disabilities? *

4. Are you a person with disabilities above 40%?

5. Disabilities Proof

6. What is your role?

Note: Once you remove or cancel a course, you will be able to edit these fields immediately.

But, for cancelled courses, refund of fees will be initiated only after 2 weeks.

12. LAST DATE FOR CANCELLING EXAMS and getting a refund: Feb 16, 2024 - 5:00 PM

13. Click here to view Timeline and Guideline : Guideline

Domain Certification

Domain Certification helps learners to gain expertise in a specific Area/Domain. This can be helpful for learners who wish to work in a particular area as part of their job or research or for those appearing for some competitive exam or becoming job ready or specialising in an area of study.

Every domain will comprise Core courses and Elective courses. Once a learner completes the requisite courses as per the mentioned criteria, they will receive a Domain Certificate showcasing their scores and the domain of expertise. Kindly refer to the following link for the list of courses available under each domain: https://nptel.ac.in/domains

Outside India Candidates

Candidates who are residing outside India may also fill the exam form and pay the fees. Mode of exam and other details will be communicated to you separately.

Thanks & Regards,

Week 2 Feedback Form: Deep Learning - IIT Ropar!!

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Session 1 : Date: Jan 30, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/sup-fxrb-gbg Session 2 : Date: Jan 30, 2024 - Tuesday Time:06.00 PM - 08.00 PM Link to join: https://meet.google.com/upg-fjmi-fpy Happy Learning. -NPTEL Team

Dear learners, There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Session 1 : Date: Jan 27, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/l/team/19%3AB9WpkGPrx_BJn0X-AnT1yjMJjAQ2eIthSXEW7DaBnIY1%40thread.tacv2/conversations?groupId=94156c13-59e5-47a4-b064-991f0d4f8d48&tenantId=6f15cd97-f6a7-41e3-b2c5-ad4193976476 Session 2 : Date: Jan 27, 2024 - Saturday Time:03.00 PM - 05.00 PM Link to join: https://teams.microsoft.com/dl/launcher/launcher.html?url=%2F_%23%2Fl%2Fmeetup-join%2F19%3Ameeting_ODUzZjM4MGYtMTlmMi00MGFiLWI3MDItNzRiNzM4ODFiMjUy%40thread.v2%2F0%3Fcontext%3D%257b%2522Tid%2522%253a%25226f15cd97-f6a7-41e3-b2c5-ad4193976476%2522%252c%2522Oid%2522%253a%252227e02253-781f-4a25-b616-7161b05d1843%2522%257d%26anon%3Dtrue&type=meetup-join&deeplinkId=93291de2-f8e6-4b97-87a7-92ca80adee81&directDl=true&msLaunch=true&enableMobilePage=true&suppressPrompt=true Happy Learning. -NPTEL Team

Deep Learning - IIT Ropar : Week 2 content & Assignment is live now !!

Dear Learners,

The lecture videos for Week 2 have been uploaded for the course "Deep Learning - IIT Ropar". The lectures can be accessed using the following link.

Link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=36&lesson=37

The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view contents (if you aren't logged in already).

Assignment-2 for Week-2 is also released and can be accessed from the following link.

Link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=36&assessment=264

The assignment has to be submitted on or before Wednesday, [07/02/2024], 23:59 IST.

As we have done so far, please use the discussion forums if you have any questions on this module.

Note: Please check the due date of the assignments in the announcement and on the assignment page. If you see any mismatch, write to us immediately.

Thanks and Regards,

-NPTEL Team

Week 1 Feedback Form: Deep Learning - IIT Ropar!!

Deep Learning - IIT Ropar : Week 1 content & Assignment is live now

Dear Learners,

The lecture videos for Week 1 have been uploaded for the course "Deep Learning - IIT Ropar". The lectures can be accessed using the following link.

Link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=17&lesson=18

The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view contents (if you aren't logged in already).

Assignment-1 for Week-1 is also released and can be accessed from the following link.

Link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=17&assessment=263

The assignment has to be submitted on or before Wednesday, [07/02/2024], 23:59 IST.

As we have done so far, please use the discussion forums if you have any questions on this module.

Note: Please check the due date of the assignments in the announcement and on the assignment page. If you see any mismatch, write to us immediately.

Thanks and Regards,

-NPTEL Team

Deep Learning - IIT Ropar: Week-1 video is live now !!

Dear Learners,

The lecture videos for Week 1 have been uploaded for the course “Deep Learning - IIT Ropar”. The lectures can be accessed using the following link.

Link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=17&lesson=18

The other lectures in this week are accessible from the navigation bar to the left. Please remember to log in to the website to view contents (if you aren't logged in already).

Assignment will be released shortly.

As we have done so far, please use the discussion forums if you have any questions on this module.

Thanks and Regards,

-NPTEL Team

Deep Learning - IIT Ropar : Assignment 0 is live now!!

Dear Learners,

We welcome you all to this course "Deep Learning - IIT Ropar". Assignment 0 has been released. This assignment is based on the prerequisites of the course. You can find the assignment at the link: https://onlinecourses.nptel.ac.in/noc24_cs59/unit?unit=16&assessment=260

Please note that this assignment is for practice and it will not be graded.

Thanks & Regards,

-NPTEL Team

NPTEL: Exam Registration is open now for Jan 2024 courses!

Deep Learning - IIT Ropar: Welcome to NPTEL Online Course - Jan 2024

- Every week, about 2.5 to 4 hours of videos containing content by the Course instructor will be released along with an assignment based on this. Please watch the lectures, follow the course regularly and submit all assessments and assignments before the due date. Your regular participation is vital for learning and doing well in the course. This will be done week on week through the duration of the course.

- Please do the assignments yourself and even if you take help, kindly try to learn from it. These assignments will help you prepare for the final exams. Plagiarism and violating the Honor Code will be taken very seriously if detected during the submission of assignments.

- The announcement group will only have messages from course instructors and teaching assistants regarding the lessons, assignments, exam registration, hall tickets, etc.

- The discussion forum (Ask a question tab on the portal) is for everyone to ask questions and interact. Anyone who knows the answers can reply to anyone's post, and the course instructor/TA will also respond to your queries.

- Please make maximum use of this feature as this will help you learn much better.

- Any questions regarding the exam, registration, hall tickets, results, the technical content in the lectures, doubts in the assignments, etc. can be posted in the forum section.

- The course is free to enroll and learn from. But if you want a certificate, you have to register and write the proctored exam conducted by us in person at any of the designated exam centres.

- The exam is optional for a fee of Rs 1000/- (Rupees one thousand only).

- Date and Time of Exams: April 28, 2024 Morning session 9am to 12 noon; Afternoon Session 2 pm to 5 pm.

- Registration URL: Announcements will be made when the registration form is open for registrations.

- The online registration form has to be filled and the certification exam fee needs to be paid. More details will be made available when the exam registration form is published. If there are any changes, it will be mentioned then.

- Please check the form for more details on the cities where the exams will be held, the conditions you agree to when you fill the form etc.

- Once again, thanks for your interest in our online courses and certification. Happy learning.

Deep Learning | NPTEL | Week 4 answers

This set of MCQs (multiple choice questions) covers the Deep Learning NPTEL Week 4 answers.

NOTE: This set of “Deep Learning NPTEL Week 4 answers” contains 10 questions.

Now, start attempting the quiz.

Deep Learning NPTEL 2023 Week 4 Quiz Solutions

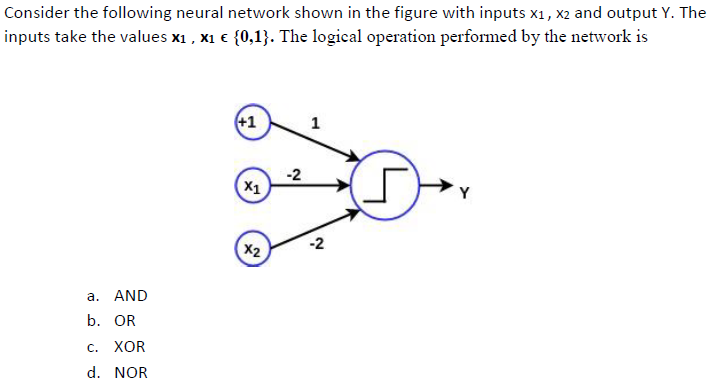

Q1. Which of the following cannot be realized with a single-layer perceptron (only input and output layer)?

a) AND b) OR c) NAND d) XOR

Answer: d) XOR
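To see why XOR is the odd one out, the following is a minimal sketch (not taken from the course material) that trains a single-layer perceptron with the classic perceptron learning rule on all four gates. The function name `train_perceptron`, the epoch limit, and the learning rate are illustrative assumptions. The linearly separable gates reach zero errors, while XOR never does.

```python
import itertools

def step(z):
    """Threshold activation: fire when the weighted sum is non-negative."""
    return 1 if z >= 0 else 0

def train_perceptron(samples, epochs=100, lr=1.0):
    """Perceptron learning rule on 2-input samples.

    Returns True if an epoch with zero misclassifications is reached.
    (epochs and lr are assumed values for this illustration.)
    """
    w1 = w2 = b = 0.0
    for _ in range(epochs):
        errors = 0
        for (x1, x2), target in samples:
            pred = step(w1 * x1 + w2 * x2 + b)
            delta = target - pred
            if delta != 0:
                # Move the decision boundary toward the misclassified point.
                w1 += lr * delta * x1
                w2 += lr * delta * x2
                b += lr * delta
                errors += 1
        if errors == 0:
            return True
    return False

inputs = list(itertools.product([0, 1], repeat=2))
gates = {
    "AND": [a & b for a, b in inputs],
    "OR": [a | b for a, b in inputs],
    "NAND": [1 - (a & b) for a, b in inputs],
    "XOR": [a ^ b for a, b in inputs],
}

results = {
    name: train_perceptron(list(zip(inputs, targets)))
    for name, targets in gates.items()
}
print(results)
```

No single line separates the XOR outputs in the input plane, so no choice of two weights and a bias can classify all four points correctly.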

Q2. For a function f(θ₀, θ₁), if θ₀ and θ₁ are initialized at a local minimum, then what should be the values of θ₀ and θ₁ after a single iteration of gradient descent?

a) θ₀ and θ₁ will update as per gradient descent rule b) θ₀ and θ₁ will remain same c) Depends on the values of θ₀ and θ₁ d) Depends on the learning rate

Q3. Choose the correct option: i) Inability of a model to obtain sufficiently low training error is termed as overfitting ii) Inability of a model to reduce large margin between training and testing error is termed as overfitting iii) Inability of a model to obtain sufficiently low training error is termed as underfitting iv) Inability of a model to reduce large margin between training and testing error is termed as underfitting

a) Only option (i) is correct b) Both options (ii) and (iii) are correct c) Both options (ii) and (iv) are correct d) Only option (iv) is correct

Q4. Suppose a cost function J(θ) = 0.25θ² as shown in the graph below. Refer to this graph and choose the correct option regarding the statements given below. θ is plotted along the horizontal axis.

a) Only Statement i is true b) Only Statement ii is true c) Both statement i and ii are true d) None of them are true

Q5. Choose the correct option. The gradient of a continuous and differentiable function: i) is zero at a minimum ii) is non-zero at a maximum iii) is zero at a saddle point iv) decreases in magnitude as you get closer to the minimum

a) Only option (i) is correct b) Options (i), (iii) and (iv) are correct c) Options (i) and (iv) are correct d) Only option (iii) is correct

Q6. Input to SoftMax activation function is [3,1,2]. What will be the output?

a) [0.58, 0.11, 0.31] b) [0.43, 0.24, 0.33] c) [0.60, 0.10, 0.30] d) [0.67, 0.09, 0.24]
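The options can be checked directly. A minimal sketch of the softmax computation for the input in Q6, using only the standard library (the helper name `softmax` is our own):

```python
import math

def softmax(xs):
    """Exponentiate each entry, then normalize so the outputs sum to 1."""
    exps = [math.exp(x) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

probs = softmax([3, 1, 2])
print([round(p, 2) for p in probs])  # [0.67, 0.09, 0.24]
```

The rounded result matches option d).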

Q7. If the SoftMax of xⱼ is denoted as σ(xⱼ), where xⱼ is the j-th element of the n-dimensional vector X = [x₁, …, xⱼ, …, xₙ], then the derivative of σ(xⱼ) w.r.t. xⱼ, i.e., ∂σ(xⱼ)/∂xⱼ, is given by:
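The answer options for Q7 did not survive on this page. As a reference point, the standard identity for the derivative of a softmax output with respect to its own input is σ(xⱼ)(1 − σ(xⱼ)). The sketch below compares that closed form against a central finite difference; the test vector and step size `h` are arbitrary assumptions.

```python
import math

def softmax(xs):
    exps = [math.exp(x) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def numeric_self_derivative(xs, j, h=1e-6):
    """Central finite difference of softmax(xs)[j] with respect to xs[j]."""
    up = list(xs)
    down = list(xs)
    up[j] += h
    down[j] -= h
    return (softmax(up)[j] - softmax(down)[j]) / (2 * h)

x = [3.0, 1.0, 2.0]  # arbitrary example input
analytic = [s * (1 - s) for s in softmax(x)]
numeric = [numeric_self_derivative(x, j) for j in range(len(x))]

for j, (a, n) in enumerate(zip(analytic, numeric)):
    print(j, a, n)
```

The two columns agree to several decimal places, which is what one would expect if the closed form is correct.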

Q8. Which of the following options is true?

a) In Stochastic Gradient Descent, a small batch of samples is selected randomly instead of the whole data set for each iteration. Too large an update of weight values leads to faster convergence. b) In Stochastic Gradient Descent, the whole data set is processed together for update in each iteration. c) Stochastic Gradient Descent considers only one sample for updates and has noisier updates. d) Stochastic Gradient Descent is a non-iterative process

Q9. What are the steps for using a gradient descent algorithm? 1. Calculate error between the actual value and the predicted value 2. Re-iterate until you find best weights of network 3. Pass an input through the network and get values from output layer 4. Initialize random weight and bias 5. Go to each neuron which contributes to the error and change its respective values to reduce the error

a) 1, 2, 3, 4, 5 b) 5, 4, 3, 2, 1 c) 3, 2, 1, 5, 4 d) 4, 3, 1, 5, 2
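The ordering becomes clearer when the steps are laid out as a training loop. This is a minimal sketch for a single linear neuron; the toy data (y = 2x + 1), the learning rate, and the epoch count are assumptions made for illustration, and the comments map each line to the step numbers in Q9.

```python
import random

random.seed(0)

# Toy data, assumed for illustration: targets follow y = 2x + 1.
data = [(x, 2 * x + 1) for x in [0.0, 1.0, 2.0, 3.0]]

# Step 4: initialize random weight and bias.
w = random.uniform(-1, 1)
b = random.uniform(-1, 1)
lr = 0.05  # assumed learning rate

# Step 2: re-iterate until the weights settle (fixed epoch count here).
for epoch in range(2000):
    for x, y in data:
        # Step 3: pass an input through the network and read the output.
        pred = w * x + b
        # Step 1: calculate the error between predicted and actual value.
        error = pred - y
        # Step 5: adjust each parameter that contributed to the error.
        w -= lr * error * x
        b -= lr * error

print(w, b)
```

Read in execution order, the loop follows 4, 3, 1, 5, 2, which corresponds to option d).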

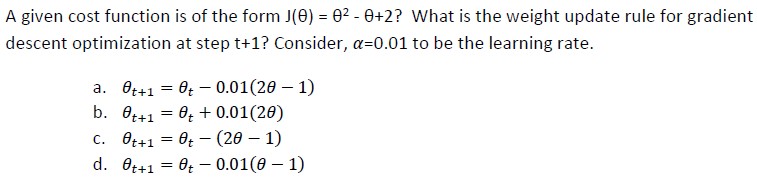

Q10. J(θ) = 2θ² – 2θ + 2 is a given cost function. Find the correct weight update rule for gradient descent optimization at step t+1. Consider α = 0.01 to be the learning rate.

a) θₜ₊₁ = θₜ – 0.01(2θ – 1) b) θₜ₊₁ = θₜ + 0.01(2θ – 1) c) θₜ₊₁ = θₜ – (2θ – 1) d) θₜ₊₁ = θₜ – 0.02(2θ – 1)
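The derivative of the cost is dJ/dθ = 4θ – 2 = 2(2θ – 1), so the update θ – α·dJ/dθ with α = 0.01 becomes θ – 0.02(2θ – 1). A short sketch that checks this numerically (the function names and the starting value are our own choices):

```python
ALPHA = 0.01  # learning rate given in Q10

def cost(theta):
    return 2 * theta**2 - 2 * theta + 2

def grad(theta):
    """Derivative of the cost: dJ/dθ = 4θ - 2."""
    return 4 * theta - 2

def gd_update(theta):
    """Generic gradient descent step: θ - α * dJ/dθ."""
    return theta - ALPHA * grad(theta)

def option_d(theta):
    """Update rule as written in option d)."""
    return theta - 0.02 * (2 * theta - 1)

theta = 3.0  # arbitrary starting point
for _ in range(2000):
    theta = gd_update(theta)

print(gd_update(3.0), option_d(3.0))
print(theta, cost(theta))
```

Both update functions give the same value for any θ, and repeated updates approach θ = 0.5, where the gradient vanishes.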

Deep Learning NPTEL 2023 Week 4 answers

Q2. Which of the following activation functions leads to sparse activation maps?

a) Sigmoid b) Tanh c) Linear d) ReLU
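Sparsity here means that many activations are exactly zero. A rough sketch, assuming pre-activations drawn from a standard normal distribution (an assumption made purely for illustration), counts exact zeros after each activation function:

```python
import math
import random

random.seed(0)

# Assumed pre-activation values: 1000 draws from N(0, 1).
pre_activations = [random.gauss(0, 1) for _ in range(1000)]

def zero_fraction(values):
    """Fraction of entries that are exactly zero."""
    return sum(1 for v in values if v == 0) / len(values)

relu_out = [max(0.0, z) for z in pre_activations]
sigmoid_out = [1 / (1 + math.exp(-z)) for z in pre_activations]
tanh_out = [math.tanh(z) for z in pre_activations]

print("ReLU   :", zero_fraction(relu_out))
print("Sigmoid:", zero_fraction(sigmoid_out))
print("Tanh   :", zero_fraction(tanh_out))
```

ReLU maps every negative input to exactly zero, so roughly half of the outputs are zero under this assumption, while sigmoid and tanh outputs are non-zero for any non-zero input.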

Q4. Which logic function cannot be performed using a single-layered Neural Network?

a) AND b) OR c) XOR d) All

Q5. Which of the following options closely relates to the following graph? Green crosses are the samples of Class-A, mustard rings are the samples of Class-B, and the red line is the separating line between the two classes.

a) High Bias b) Zero Bias c) Zero Bias and High Variance d) Zero Bias and Zero Variance

Q6. Which of the following statements is true?

a) L2 regularization leads to sparse activation maps b) L1 regularization leads to sparse activation maps c) Some of the weights are squashed to zero in L2 regularization d) L2 regularization is also known as Lasso

Q7. Which among the following options gives the range for a tanh function?

a) -1 to 1 b) -1 to 0 c) 0 to 1 d) 0 to infinity

Q9. When is the gradient descent algorithm certain to find a global minimum?

a) For convex cost plot b) For concave cost plot c) For union of 2 convex cost plot d) For union of 2 concave cost plot

Q10. Let X = [-1, 0, 3, 5] be the input of the i-th layer of a neural network. On this, we want to apply the softmax function. What should be the output of it?

a) [0.368, 1, 20.09, 148.41] b) [0.002, 0.006, 0.118, 0.874] c) [0.3, 0.05, 0.6, 0.05] d) [0.04, 0, 0.06, 0.9]
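Two of the options are easy to confuse, so it helps to print both the raw exponentials and the normalized result. The sketch below also subtracts the maximum before exponentiating, a common numerical-stability trick that leaves the result unchanged; this variant is our own choice and is not implied by the question.

```python
import math

def stable_softmax(xs):
    """Softmax with the maximum subtracted first to avoid overflow."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

x = [-1, 0, 3, 5]

# Raw exponentials: these are what option a) lists, and they do not sum to 1.
raw_exps = [round(math.exp(v), 3) for v in x]
print(raw_exps)  # [0.368, 1.0, 20.086, 148.413]

# Normalized softmax output.
probs = [round(p, 3) for p in stable_softmax(x)]
print(probs)  # [0.002, 0.006, 0.118, 0.874]
```

Option a) stops at the exponentiation step; dividing by the sum of those values gives option b).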

1 thought on “Deep Learning | NPTEL | Week 4 answers”

Are Q. no. 5 & 6 correct?